Scaling Data Pipelines in the Cloud for the AI Era

Cloud batch schedulers were designed to replicate the HPC model, but that static approach doesn't take advantage of the cloud's elasticity, leading to overprovisioned resources, manual tuning, and wasted spend.

We’ve approached this problem from a variety of angles over the past few years, developing Fusion to optimize the interface between schedulers and data, Pipeline Optimization to better predict resourcing requirements for Nextflow, and Snapshots to minimize disruptions while using Spot instances. With each of these approaches we’ve remained limited by the underlying scheduler, which complicates troubleshooting, limits visibility, and restricts our freedom to truly optimize cost and performance for bioinformatics.

To solve this, we’re introducing Seqera Intelligent Compute, a new-generation compute service that abstracts away the complexity of scaling data pipelines in the cloud for the AI era. The result is more resource-efficient workflows, reducing the number of instances per run, eliminating unutilized resources, and lowering overall costs. Intelligent Compute achieves levels of optimization that would take teams months of manual tuning to approach and allows teams to scale and run workflows in the most efficient way.

We Need a New Generation of Compute

Scheduling was born alongside HPC clusters with a fixed number of nodes, a known CPU architecture, uniform storage, and a consistent network topology. The scheduler's job was to optimally pack jobs onto this known set of resources. This model worked well on-premise because the hardware didn't change. You bought your machines, racked them, and ran workloads on them for years. The scheduler could be tuned once for that specific configuration.

The first generation of cloud schedulers (AWS Batch, Google Cloud Life Sciences, and Azure Batch) mimicked traditional HPC job schedulers and carried this design philosophy forward. This made sense at the time: organizations were migrating from on-premise clusters to the cloud, and familiar abstractions eased the transition. But these schedulers inherited a fundamental assumption from the HPC world: the static model.

Cloud Scheduling Should be Dynamic

Scheduling in the cloud should be a fundamentally different problem. The assumptions that made static schedulers effective on-premise break down across every dimension: resource capacity, architectures, machine families, spot instances, and configuration. Cloud infrastructure is dynamic, but existing batch schedulers treat it as if it were static, so you pay cloud prices while underutilizing resources and missing out on true cloud flexibility. Modern bioinformatics deserves compute that adapts to the abundant and dynamic nature of the cloud: compute that takes advantage of the breadth of architectures and machine types, and builds schedules that account for resource availability, current pricing, data residency, and the specifics of the analysis being run.

How Seqera Intelligent Compute Solves This

Seqera Intelligent Compute takes a fundamentally new approach: instead of requiring pre-configured static compute environments, it continuously adapts to real-time conditions and optimizes resource selection and allocation at execution time across every batch of tasks. The result is fewer instances, lower costs, and optimal resource utilization at every point in the run. No compute environments to configure, no instance type selections to maintain, no manual cost optimization to perform.

Dynamic task profiling, batching, and bin sizing

When Nextflow submits tasks, Intelligent Compute batches and profiles each group to determine the most efficient machine types available to process them. Instead of one-task-per-instance or coarse-grained allocation, Intelligent Compute packs tasks densely onto right-sized machines, reducing the total number of instances required per run while respecting resource constraints.
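As a rough illustration of dense packing, here is a minimal first-fit-decreasing sketch. It is not Seqera's actual algorithm, which is not described here; the task names, resource requests, and the single 16-CPU / 64 GiB instance shape are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str        # hypothetical task name
    cpus: int
    mem_gib: float


@dataclass
class Instance:
    cpus: int
    mem_gib: float
    tasks: list = field(default_factory=list)

    def fits(self, task: Task) -> bool:
        """True if the task fits in the CPU and memory still free on this instance."""
        used_cpus = sum(t.cpus for t in self.tasks)
        used_mem = sum(t.mem_gib for t in self.tasks)
        return (used_cpus + task.cpus <= self.cpus
                and used_mem + task.mem_gib <= self.mem_gib)


def pack_tasks(tasks, inst_cpus, inst_mem_gib):
    """First-fit decreasing: place the largest tasks first and open a new
    instance only when no already-open instance has room."""
    instances = []
    for task in sorted(tasks, key=lambda t: (t.cpus, t.mem_gib), reverse=True):
        if task.cpus > inst_cpus or task.mem_gib > inst_mem_gib:
            raise ValueError(f"{task.name} does not fit on this instance shape")
        target = next((i for i in instances if i.fits(task)), None)
        if target is None:
            target = Instance(inst_cpus, inst_mem_gib)
            instances.append(target)
        target.tasks.append(task)
    return instances


# Hypothetical batch of six tasks.
tasks = [
    Task("align", 8, 32), Task("fastqc", 2, 4), Task("trim", 4, 8),
    Task("quant", 6, 16), Task("multiqc", 1, 2), Task("sort", 4, 16),
]
packed = pack_tasks(tasks, inst_cpus=16, inst_mem_gib=64)
for n, inst in enumerate(packed):
    print(n, [t.name for t in inst.tasks])
```

With these made-up inputs, the six tasks land on two instances instead of six under a one-task-per-instance policy. A real scheduler would also choose among many instance shapes and prices, which this sketch leaves out.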

Transparent multi-architecture container execution

When Intelligent Compute selects an architecture, for example a Graviton (ARM64) instance because it offers the best price-performance for a given task, it automatically provides the container image for that architecture with no user intervention required. Workloads run transparently on mixed CPU architectures (AMD64 and ARM64), depending on which is most efficient and available at execution time.
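The selection step can be pictured with a small sketch like the one below. It assumes a simple cost-per-unit-of-work score and hypothetical offers; the instance names, prices, and speed multipliers are invented for illustration and do not come from Seqera or any price list. The platform strings follow the usual container convention of `linux/amd64` and `linux/arm64`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    instance_type: str     # hypothetical name
    arch: str              # "x86_64" or "arm64"
    usd_per_hour: float    # hypothetical price
    relative_speed: float  # hypothetical throughput multiplier for this task


# Map CPU architecture to the container image platform to pull.
PLATFORM = {"x86_64": "linux/amd64", "arm64": "linux/arm64"}


def cheapest_per_unit_work(offers):
    """Pick the offer with the lowest price per unit of work done."""
    return min(offers, key=lambda o: o.usd_per_hour / o.relative_speed)


def image_platform(offer: Offer) -> str:
    """Return the image platform matching the chosen instance architecture."""
    return PLATFORM[offer.arch]


offers = [
    Offer("x86-example", "x86_64", 0.40, 1.00),
    Offer("arm-example", "arm64", 0.30, 0.95),
]
best = cheapest_per_unit_work(offers)
print(best.instance_type, image_platform(best))
```

In this toy case the ARM offer wins despite being slightly slower, because its price per unit of work is lower, and the matching `linux/arm64` image variant is selected alongside it.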

Continuous resource intelligence

Intelligent Compute’s scheduling is rooted in models that predict the actual CPU, memory, disk, and runtime requirements of each task type across runs, rather than relying on what was requested. These models also learn and evolve over time, converging toward optimal values automatically.
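To make the idea of predicting from observed usage concrete, here is a deliberately simple sketch. It assumes a "maximum observed peak plus headroom" rule, which is a stand-in and not the model Intelligent Compute uses; the class name, process name, 20% headroom, and sample values are all hypothetical.

```python
import math
from collections import defaultdict


class MemoryEstimator:
    """Toy estimator: predicts memory from peaks observed in earlier runs."""

    def __init__(self, headroom: float = 1.2):
        self.headroom = headroom          # assumed safety margin
        self.peaks = defaultdict(list)    # process name -> observed peaks (GiB)

    def observe(self, process: str, peak_gib: float) -> None:
        """Record the peak memory a finished task actually used."""
        self.peaks[process].append(peak_gib)

    def estimate(self, process: str, requested_gib: float) -> float:
        """Fall back to the request with no history; otherwise use the
        largest observed peak plus headroom, capped at the request."""
        history = self.peaks.get(process)
        if not history:
            return requested_gib
        predicted = math.ceil(max(history) * self.headroom)
        return min(requested_gib, predicted)


est = MemoryEstimator()
first = est.estimate("ALIGN", requested_gib=64)   # no history yet
for peak in (30.5, 28.0, 31.0):                   # hypothetical observations
    est.observe("ALIGN", peak)
later = est.estimate("ALIGN", requested_gib=64)
print(first, later)
```

The estimate starts at the requested 64 GiB and tightens once real usage has been seen, which is the general shape of "learning across runs" even though a production model would be considerably more involved.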

Not only that, but Seqera maintains a real-time database of all machine types, pricing, and availability across regions and cloud providers. This enables continuous predictions that take into account historical and point-in-time data to fine-tune scheduling with each batch of tasks, throughout the entire execution.

Cross-task data sharing

Fusion has been re-engineered specifically for Intelligent Compute to remove the operational and cost overhead of fetching and caching the same data repeatedly for multiple tasks, and to enable strategic sharing of data across tasks.

Smart error recovery

Intelligent Compute understands failure modes and responds intelligently:

- Out-of-memory errors: The task is automatically retried with increased memory allocation, with no user intervention and no pipeline-level retry configuration needed.

- Snapshots: Long-running tasks leverage Fusion Snapshots to resume from checkpoints rather than restarting from scratch, saving both time and compute.

- Spot preemption: If a task is preempted too many times due to spot unavailability, Intelligent Compute automatically re-executes it on an on-demand instance, ensuring forward progress.
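The out-of-memory and spot-preemption behaviours above can be sketched as a small decision function. The doubling factor and the threshold of three preemptions are assumptions chosen for illustration; the text does not state the actual values, and the names here are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attempt:
    mem_gib: float
    capacity: str  # "spot" or "on-demand"


def next_attempt(current: Attempt, failure: str, spot_preemptions: int,
                 mem_factor: float = 2.0, max_spot_preemptions: int = 3) -> Attempt:
    """Decide how to re-run a failed task.

    failure is "oom" or "spot_preempted"; spot_preemptions counts how many
    times this task has been preempted so far, including the latest one.
    """
    if failure == "oom":
        # Retry with more memory on the same kind of capacity.
        return Attempt(current.mem_gib * mem_factor, current.capacity)
    if failure == "spot_preempted":
        if spot_preemptions >= max_spot_preemptions:
            # Too many preemptions: fall back to on-demand to guarantee progress.
            return Attempt(current.mem_gib, "on-demand")
        return Attempt(current.mem_gib, "spot")
    raise ValueError(f"unhandled failure type: {failure}")


start = Attempt(mem_gib=8, capacity="spot")
after_oom = next_attempt(start, "oom", spot_preemptions=0)
after_one_preemption = next_attempt(start, "spot_preempted", spot_preemptions=1)
after_three_preemptions = next_attempt(start, "spot_preempted", spot_preemptions=3)
print(after_oom, after_one_preemption, after_three_preemptions)
```

Checkpoint resume via Fusion Snapshots is left out of this sketch, since it depends on snapshot machinery rather than on a retry decision.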

Benchmarks: Seqera Intelligent Compute vs. AWS Batch

We benchmarked Seqera Intelligent Compute against traditional AWS Batch configurations using nf-core/rnaseq. These benchmarks demonstrate the tangible cost and efficiency gains that Intelligent Compute delivers out of the box: more efficient compute, fewer machines deployed, faster results, and lower costs.

Less compute, faster results.

We tested AWS Batch across a number of configurations: ‘plain’ AWS Batch, AWS Batch pinned to specific 6th-generation compute instances, and AWS Batch with Fusion.

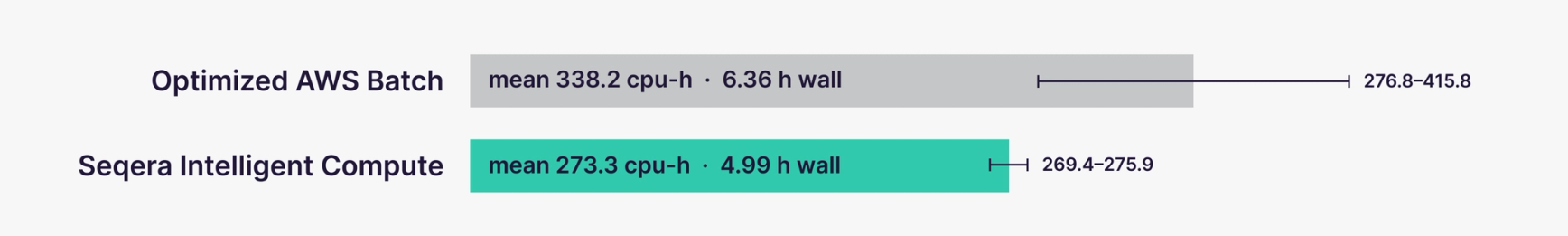

Seqera Intelligent Compute’s more efficient task allocation reduced total CPU hours by approximately 19%, averaging 273 CPU·h compared to 338 CPU·h with optimized AWS Batch. Wall-clock time improved as well, with Intelligent Compute completing runs in roughly 5 h vs about 6.4 h for optimized AWS Batch, a reduction of about 22% in end-to-end turnaround. Compared to standard AWS Batch (on-demand), the reduction was even bigger at 57% (more than 11.5 h compared to 5 h).

Intelligent Compute can deliver both lower resource consumption and faster time-to-result, enabling teams to iterate and scale more quickly and efficiently while reducing per-run cloud spend.

| Per run, aggregated | AWS Batch | Seqera Intelligent Compute | Change |
|---|---|---|---|
| CPU·h consumed | 338.2 (276.8–415.8) | 273.3 (269.4–275.9) | -19.2% |
| Execution time (h) | 6.36 (3.72–9.30) | 4.99 (4.97–5.02) | -21.5% |
| Throughput (CPU·h / wall-clock h) | 53.2 | 54.8 | +3.0% |
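For readers who want to check the arithmetic, the percentage column can be recomputed from the mean values in the table. The snippet below uses only the reported figures; the variable names are ours.

```python
def pct_change(baseline: float, new: float) -> float:
    """Relative change from baseline to new, in percent."""
    return (new - baseline) / baseline * 100


# Mean values reported in the table above.
batch_cpu_h, ic_cpu_h = 338.2, 273.3
batch_wall_h, ic_wall_h = 6.36, 4.99

cpu_change = pct_change(batch_cpu_h, ic_cpu_h)
wall_change = pct_change(batch_wall_h, ic_wall_h)

# Throughput is CPU hours delivered per wall-clock hour.
batch_throughput = batch_cpu_h / batch_wall_h
ic_throughput = ic_cpu_h / ic_wall_h
throughput_change = pct_change(batch_throughput, ic_throughput)

print(f"CPU-h change:      {cpu_change:+.1f}%")
print(f"Wall-clock change: {wall_change:+.1f}%")
print(f"Throughput:        {batch_throughput:.1f} -> {ic_throughput:.1f} "
      f"({throughput_change:+.1f}%)")
```

This reproduces the -19.2%, -21.5%, and +3.0% figures shown in the table.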

Costs cut by more than half

Beyond performance gains, Intelligent Compute delivered substantial cost savings. Across our benchmarking runs, the mean compute cost dropped by 57% (from $34.25 per run with AWS Batch to $14.87 with Intelligent Compute). This saving is driven by two compounding factors. First, Intelligent Compute uses fewer CPU hours overall (273 vs. 338 CPU·h) by packing tasks more effectively onto compute instances. Second, each CPU hour costs less ($0.0544 vs. $0.1013 per CPU·h). In other words, the pipeline consumes fewer CPU hours and pays less for each one, cutting costs by more than half. Compared to standard AWS Batch (on-demand), the cost savings were even greater at 73% (mean $56 per run vs. $14.87).

| Per run, aggregated | AWS Batch | Seqera Intelligent Compute | Change |
|---|---|---|---|
| Compute cost per run | $34.25 ($29.12–$41.63) | $14.87 ($14.30–$15.82) | -56.6% |
| Compute cost per sample | $4.28 | $1.86 | -56.6% |
| Cost per CPU·h | $0.1013 | $0.0544 | -46.3% |
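The way the two factors compound can be checked directly, since cost per run is CPU hours multiplied by cost per CPU hour. The sketch below uses only the mean figures reported above; small differences of about a cent come from rounding in the published values.

```python
# Mean values reported in the benchmark tables.
batch = {"cpu_h": 338.2, "usd_per_cpu_h": 0.1013}
ic = {"cpu_h": 273.3, "usd_per_cpu_h": 0.0544}


def run_cost(run: dict) -> float:
    """Cost per run = CPU hours consumed x price paid per CPU hour."""
    return run["cpu_h"] * run["usd_per_cpu_h"]


# Each factor expressed as a ratio of Intelligent Compute to AWS Batch.
usage_ratio = ic["cpu_h"] / batch["cpu_h"]                  # fewer CPU hours
price_ratio = ic["usd_per_cpu_h"] / batch["usd_per_cpu_h"]  # cheaper CPU hours

# The factors multiply, so the combined cost ratio is their product.
combined_ratio = usage_ratio * price_ratio
saving_pct = (1 - combined_ratio) * 100

print(f"AWS Batch cost per run:           ${run_cost(batch):.2f}")
print(f"Intelligent Compute cost per run: ${run_cost(ic):.2f}")
print(f"Usage ratio {usage_ratio:.3f} x price ratio {price_ratio:.3f} "
      f"= {combined_ratio:.3f} ({saving_pct:.1f}% saving)")
```

Roughly a 19% cut in CPU hours combined with a 46% cut in price per CPU hour yields the 56.6% overall saving, which is why the two effects are described as compounding rather than additive.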

Join the Private Preview

Seqera Intelligent Compute will be available soon in private preview for AWS, with support for other cloud providers to follow. If you're running Nextflow pipelines on AWS and want to see what continuous compute optimization can do for your team — lower costs, higher efficiency, zero infrastructure tuning — we'd love to hear from you.